Supermicro NVIDIA HGX™ B300

AI GPU Clusters

Krambu is a Supermicro Channel Partner, providing direct access to Supermicro’s latest NVIDIA AI systems and GPU servers. Our collaboration ensures customers can deploy industry-leading AI infrastructure with optimized performance, efficiency, and scalability across Krambu’s advanced data-center platforms.

Direct access to Supermicro’s latest NVIDIA HGX B300 AI systems. Deploy industry-leading GPU infrastructure with Krambu.

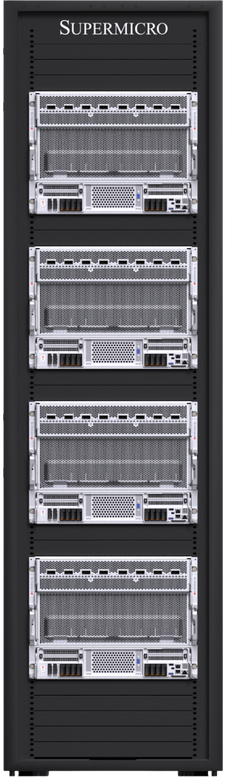

Integrated Rack Solutions

The Supermicro NVIDIA HGX platform is the building block of the world’s largest AI training clusters, delivering the compute that today’s AI applications demand. Now featuring NVIDIA Blackwell Ultra, Supermicro’s NVIDIA HGX B300 solutions enable rapid deployment of the industry’s highest-performing AI infrastructure.

Liquid-Cooled B300 Systems

Supermicro NVIDIA HGX™ B300 8-GPU Systems, 4U Liquid-Cooled

Compute

- 8× SYS-422GS-NB3RT-LCC/ALC

- 64× NVIDIA B300 GPUs per rack (8 systems × 8 GPUs)

- 18.4 TB of HBM3e per rack

- NVIDIA GPUDirect RDMA and GPUDirect Storage, with RoCE support

Cooling

- Supermicro 250 kW capacity CDU

- Redundant PSUs and dual hot-swap pumps

- Vertical Cooling Distribution Manifold

Networking

- Quantum-X800 InfiniBand or Spectrum-X Ethernet

- Up to 800 Gb/s compute fabric

- Non-blocking network architecture

- Out-of-band 1G/10G IPMI switch

Air-Cooled B300 Systems

Supermicro NVIDIA HGX™ B300 8-GPU Systems, 8U Air-Cooled

Compute

- 4× SYS-822GS-NB3RT or AS-8126GS-NB3RT

- 32× NVIDIA B300 GPUs per rack

- 9.2 TB of HBM3e per rack

- NVIDIA GPUDirect RDMA and GPUDirect Storage, with RoCE support
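
The per-rack figures in this list follow from simple arithmetic, which the short sketch below reproduces. It assumes 288 GB of HBM3e per B300 GPU, a figure not stated on this page, and uses decimal terabytes; the function name `rack_totals` is hypothetical and chosen only for illustration.

```python
# Assumption (not stated on this page): each B300 GPU carries 288 GB of HBM3e.
HBM3E_PER_GPU_GB = 288
GPUS_PER_SYSTEM = 8  # from the "8-GPU Systems" product name


def rack_totals(systems_per_rack: int) -> tuple[int, float]:
    """Return (GPU count, HBM3e capacity in decimal TB) for one rack."""
    gpus = systems_per_rack * GPUS_PER_SYSTEM
    hbm_tb = gpus * HBM3E_PER_GPU_GB / 1000  # GB -> decimal TB
    return gpus, hbm_tb


# Air-cooled rack: 4 systems per rack.
gpus, hbm_tb = rack_totals(systems_per_rack=4)
print(f"{gpus} GPUs, {hbm_tb:.1f} TB HBM3e")  # prints "32 GPUs, 9.2 TB HBM3e"
```

Passing a different `systems_per_rack` gives the totals for other rack layouts under the same per-GPU assumption.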

Cooling & Deployment

- Deploy in any standard data-center environment

- No facility water loop or CDU required

- Drop-in upgrade path for existing racks

Networking

- Quantum-X800 InfiniBand or Spectrum-X Ethernet

- Up to 800 Gb/s compute fabric

- Ethernet leaf switches for in-band management

- Out-of-band 1G/10G IPMI switch

Ready to Scale Your Infrastructure?

Whether you need GPU clusters, colocation, or custom server solutions, our team is ready to help design the right infrastructure for your needs.