Category: GPU

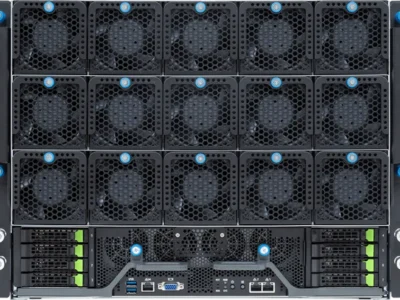

Supermicro SYS-522GA-NRT | 8x NVIDIA RTX PRO 6000 Blackwell PCIe Server

A versatile 5U air-cooled GPU server featuring 8 NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs with 768GB total GDDR7 memory, dual Intel Xeon 6952P processors (192 cores), 1.5TB DDR5-6400 ECC RAM, and dual-root PCIe 5.0 architecture. Purpose-built for AI inference, LLM fine-tuning, generative AI, 3D rendering, VDI, and HPC workloads requiring dense PCIe GPU compute with enterprise-grade reliability.

HPE Cray XD670 | 8x NVIDIA H100 SXM5 GPU Server

The HPE Cray XD670 is a 5U air-cooled GPU server featuring 8 NVIDIA H100 SXM5 80GB GPUs with 900 GB/s NVLink interconnect, dual Intel Xeon Platinum 8462Y+ processors, 2TB DDR5 ECC memory, and 3.2 Tb/s of InfiniBand NDR fabric bandwidth via 8 ConnectX-7 adapters. Purpose-built for large-scale AI training, LLM fine-tuning, generative AI, and HPC workloads requiring maximum GPU density and interconnect performance.

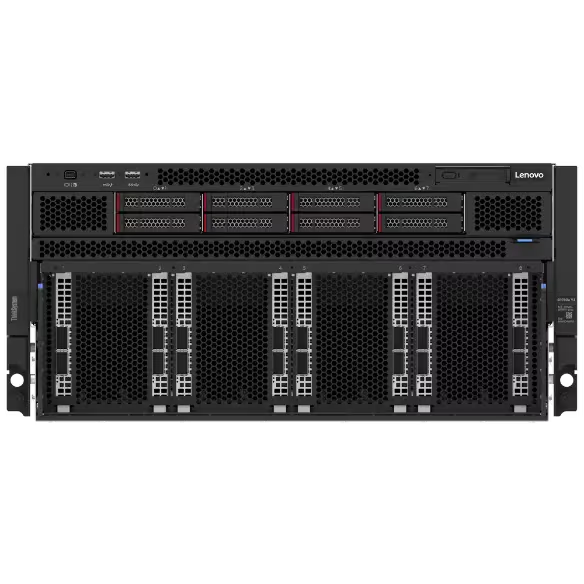

Lenovo ThinkSystem SR780a V3 | 8x NVIDIA HGX B200 Liquid-Cooled GPU Server

The Lenovo ThinkSystem SR780a V3 is a liquid-cooled 5U GPU server featuring 8 NVIDIA HGX B200 SXM6 GPUs with 1.44TB of HBM3e memory, dual Intel Xeon Platinum 8570 processors, 2TB DDR5-5600 ECC RAM, and 8x 400 Gb/s NDR InfiniBand networking via ConnectX-7. Purpose-built for large-scale AI training, LLM inference, generative AI, and HPC workloads requiring maximum compute density, GPU interconnect bandwidth, and energy-efficient liquid cooling.

Gigabyte G894-SD3-AAX7 | 8x NVIDIA HGX B300 GPU Server

A high-density air-cooled 8U AI server featuring 8 NVIDIA HGX B300 Blackwell Ultra GPUs with 2.3TB of HBM3e memory, dual Intel Xeon 6767P processors, 3TB DDR5 ECC memory, and 800 Gb/s OSFP networking. Purpose-built for large-scale AI training, LLM reasoning, generative AI, and HPC workloads requiring maximum compute density and interconnect bandwidth.

Supermicro SYS-A22GA-NBRT-G1 | 8x NVIDIA HGX B200 GPU Server

A quick-ship 10U AI server featuring 8 NVIDIA HGX B200 Blackwell SXM GPUs with 1.44TB of HBM3e memory, dual Intel Xeon 6960P processors (144 cores), 2TB DDR5-6400 ECC memory, and 400 Gb/s OSFP networking via ConnectX-7. Purpose-built for large-scale AI training, LLM reasoning, generative AI, and HPC workloads requiring maximum compute density and GPU interconnect bandwidth.

Supermicro SYS-822GS-NB3RT | 8x NVIDIA HGX B300 GPU Server

A high-density air-cooled 8U AI server featuring 8 NVIDIA HGX B300 Blackwell Ultra SXM GPUs with 2.3TB HBM3e, dual Intel Xeon 6767P processors, 3TB DDR5 ECC memory, and 8x 800 Gb/s ConnectX-8 SuperNIC networking. Purpose-built for large-scale AI training, LLM reasoning, generative AI, and HPC workloads requiring maximum compute density, interconnect bandwidth, and enterprise reliability.

Supermicro SYS-821GE-TNHR | 8x NVIDIA H100 HGX SXM5 GPU Server

The Supermicro SYS-821GE-TNHR is an air-cooled 8U GPU server featuring 8 NVIDIA H100 80GB SXM5 GPUs on the HGX platform with 900 GB/s NVLink per GPU, dual Intel Xeon Platinum 8570 processors (56 cores each), 2TB DDR5-5600 ECC memory, and 8x NDR 400 Gb/s OSFP networking via ConnectX-7. Purpose-built for large-scale AI training, LLM inference, generative AI, and HPC workloads requiring maximum GPU interconnect bandwidth and dense compute.

Supermicro AS-8126GS-TNMR | 8x AMD Instinct MI350X GPU Server

The Supermicro AS-8126GS-TNMR is an air-cooled 8U GPU server featuring 8 AMD Instinct MI350X OAM accelerators with 2.3TB total HBM3e memory, dual AMD EPYC 9575F processors (128 cores total), 2.25TB DDR5-6400 ECC memory, and 8x 400 Gb/s NDR InfiniBand/Ethernet OSFP networking via NVIDIA ConnectX-7. Purpose-built for large-scale AI training, LLM inference, generative AI, and HPC workloads requiring massive GPU memory capacity and high-bandwidth interconnect.

GPU SuperServer B300

A high-density liquid-cooled 8U AI server featuring 8 NVIDIA HGX B300 GPUs, dual Intel Xeon processors, DDR5 memory, and 800 GbE networking. Designed for large-scale AI training, LLM, and HPC workloads requiring maximum performance and bandwidth.

Krambu HGX B300 DLC

Supermicro 4U GPU A+ Server

- Key Features/Applications: High Performance Computing, AI / Deep Learning

- CPU: Dual AMD EPYC™ 7003/7002 Series Processors

- Chassis: 4U Rackmount

- Drive: 6 hot-swap 2.5″ NVMe drive bays, or up to 10 U.2 NVMe 2.5″ drives with the optional 4 drive bays at the rear of the system

- RAM: 32 DDR4 DIMM Slots

- GPU: Supports NVIDIA® HGX™ A100 8-GPU

- Network Ports: Flexible Networking Options

Need a Custom Configuration?

Our engineering team can design and deploy custom GPU clusters tailored to your specific AI workloads, from single nodes to full-rack solutions.