Description

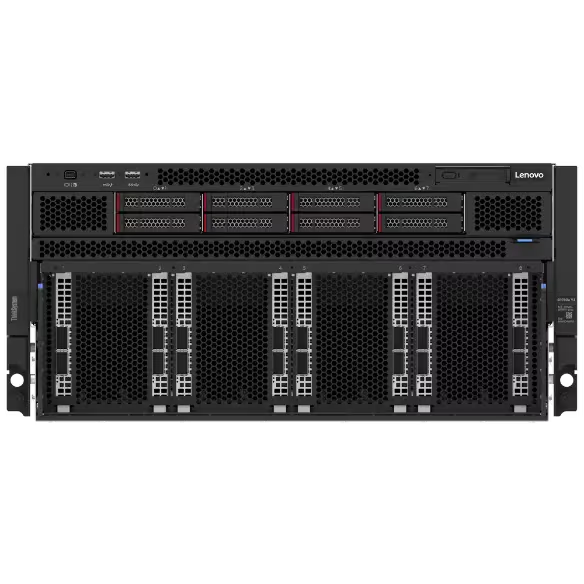

Accelerate your most demanding AI and HPC workloads with the Lenovo ThinkSystem SR780a V3, a liquid-cooled 5U GPU server built on the NVIDIA HGX B200 platform. Powered by 8 NVIDIA B200 SXM6 GPUs delivering 1.44TB of total HBM3e memory, dual Intel Xeon Platinum 8570 processors (56 cores, 2.1 GHz, 350W TDP each), and 2TB of DDR5-5600 ECC memory, this system is engineered for large-scale AI training, LLM inference, generative AI, and complex scientific computing. Lenovo Neptune direct liquid cooling keeps all eight 1000W GPUs and both CPUs operating at sustained peak performance in a dense 5U footprint.
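For sizing purposes, the headline figures above can be broken down per device. The short sketch below is illustrative only: it assumes decimal units (1 TB = 1000 GB) and that the 1.44TB of HBM3e is split evenly across the eight GPUs, and it sums only the GPU and CPU power ratings quoted above, not total system power. The function and constant names are hypothetical.

```python
# Figures taken from the description above; names are illustrative.
GPU_COUNT = 8
TOTAL_HBM_GB = 1440      # 1.44 TB, assuming decimal units (1 TB = 1000 GB)
GPU_POWER_W = 1000       # per-GPU rating quoted in the description
CPU_COUNT = 2
CPU_TDP_W = 350          # per-CPU TDP quoted in the description


def hbm_per_gpu_gb() -> float:
    """HBM3e per GPU, assuming an even split across all GPUs."""
    return TOTAL_HBM_GB / GPU_COUNT


def gpu_and_cpu_power_w() -> int:
    """Combined GPU and CPU power rating (excludes memory, NICs, drives, fans)."""
    return GPU_COUNT * GPU_POWER_W + CPU_COUNT * CPU_TDP_W


if __name__ == "__main__":
    print(f"HBM3e per GPU: {hbm_per_gpu_gb():.0f} GB")
    print(f"GPU + CPU power rating: {gpu_and_cpu_power_w()} W")
```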

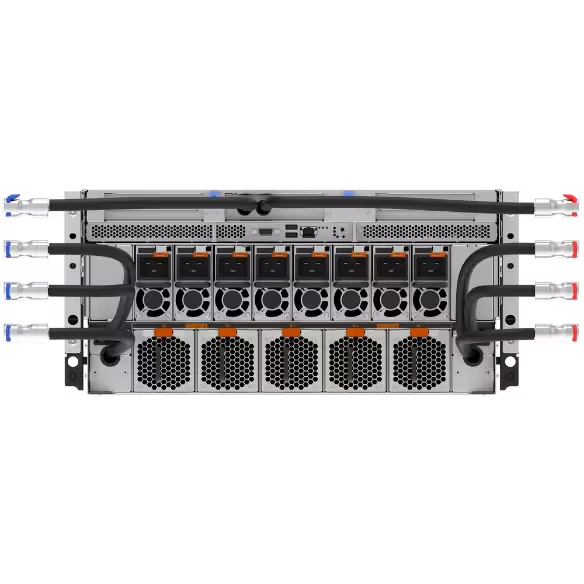

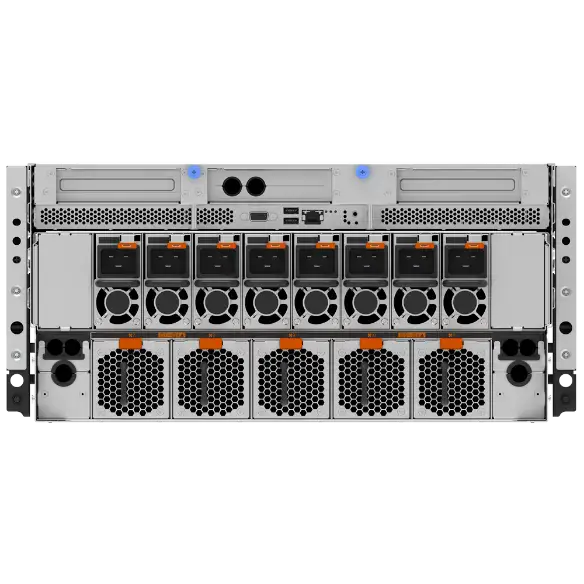

The SR780a V3 delivers exceptional GPU-to-GPU communication through fifth-generation NVLink and NVSwitch, providing 1.8 TB/s of bidirectional bandwidth per GPU so that all eight Blackwell GPUs scale together during large model training and inference runs. Eight front-accessible NVIDIA ConnectX-7 NDR InfiniBand adapters provide 8x 400 Gb/s OSFP ports with GPUDirect RDMA support, enabling high-performance multi-node clustering and integration into large-scale AI fabrics. An additional Mellanox ConnectX-6 Dx dual-port 100GbE QSFP56 adapter handles dedicated management and storage networking.
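When planning a cluster fabric, it can help to convert the per-port InfiniBand figure into an aggregate per-node number. The sketch below uses only the port count and line rate quoted above and converts bits to bytes (8 bits per byte). It reports nominal line rate, not achievable throughput, and the names are hypothetical.

```python
# Figures taken from the description above; names are illustrative.
IB_PORTS = 8
IB_PORT_GBIT_S = 400     # NDR line rate per port, in gigabits per second
BITS_PER_BYTE = 8


def aggregate_ib_gbit_s() -> int:
    """Nominal InfiniBand line rate per node, in Gb/s."""
    return IB_PORTS * IB_PORT_GBIT_S


def aggregate_ib_gbyte_s() -> float:
    """The same aggregate expressed in gigabytes per second."""
    return aggregate_ib_gbit_s() / BITS_PER_BYTE


if __name__ == "__main__":
    print(f"Aggregate InfiniBand: {aggregate_ib_gbit_s()} Gb/s "
          f"({aggregate_ib_gbyte_s():.0f} GB/s) nominal")
```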

With 30.72TB of high-speed NVMe storage across 8x Samsung PM1743 PCIe Gen5 U.3 drives, plus 1.92TB of M.2 NVMe boot storage, the SR780a V3 provides ample local capacity for datasets and checkpointing. Six 2600W 80 PLUS Titanium hot-swap power supplies in an N+1 redundant configuration with over-subscription keep the system running if a single supply fails, while two rear PCIe Gen5 x16 risers offer additional expansion. Backed by a 3-year Premier OEM next-business-day warranty, this system is ready to deploy in production AI factories, neoclouds, and enterprise data centers.
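The storage and power figures above can be checked with simple arithmetic. The sketch below assumes decimal units, eight identical data drives, and a plain N+1 model in which one supply is held in reserve. It does not model the over-subscription mode, whose limits are not stated here. All names are hypothetical.

```python
# Figures taken from the description above; names are illustrative.
DATA_DRIVES = 8
TOTAL_NVME_GB = 30720    # 30.72 TB, assuming decimal units
PSU_COUNT = 6
PSU_RATING_W = 2600
REDUNDANT_PSUS = 1       # N+1: one supply held in reserve


def capacity_per_drive_gb() -> float:
    """Capacity per data drive, assuming eight identical drives."""
    return TOTAL_NVME_GB / DATA_DRIVES


def installed_psu_capacity_w() -> int:
    """Sum of all installed power supply ratings."""
    return PSU_COUNT * PSU_RATING_W


def n_plus_1_capacity_w() -> int:
    """Capacity still available with one supply failed or removed."""
    return (PSU_COUNT - REDUNDANT_PSUS) * PSU_RATING_W


if __name__ == "__main__":
    print(f"Per-drive capacity: {capacity_per_drive_gb() / 1000:.2f} TB")
    print(f"Installed PSU capacity: {installed_psu_capacity_w()} W")
    print(f"N+1 capacity: {n_plus_1_capacity_w()} W")
```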