Description

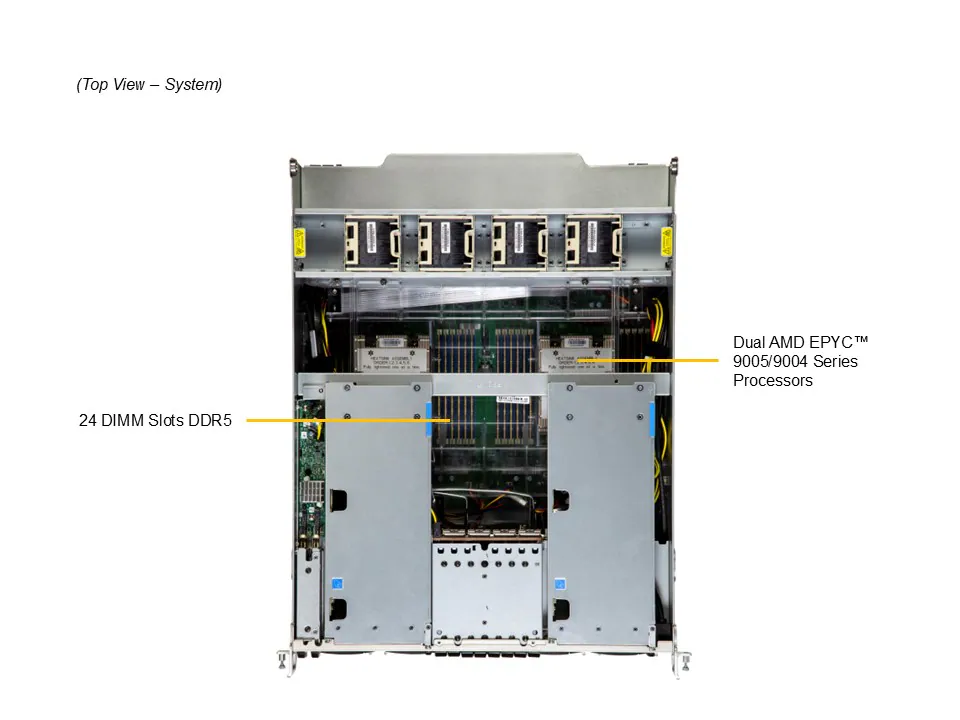

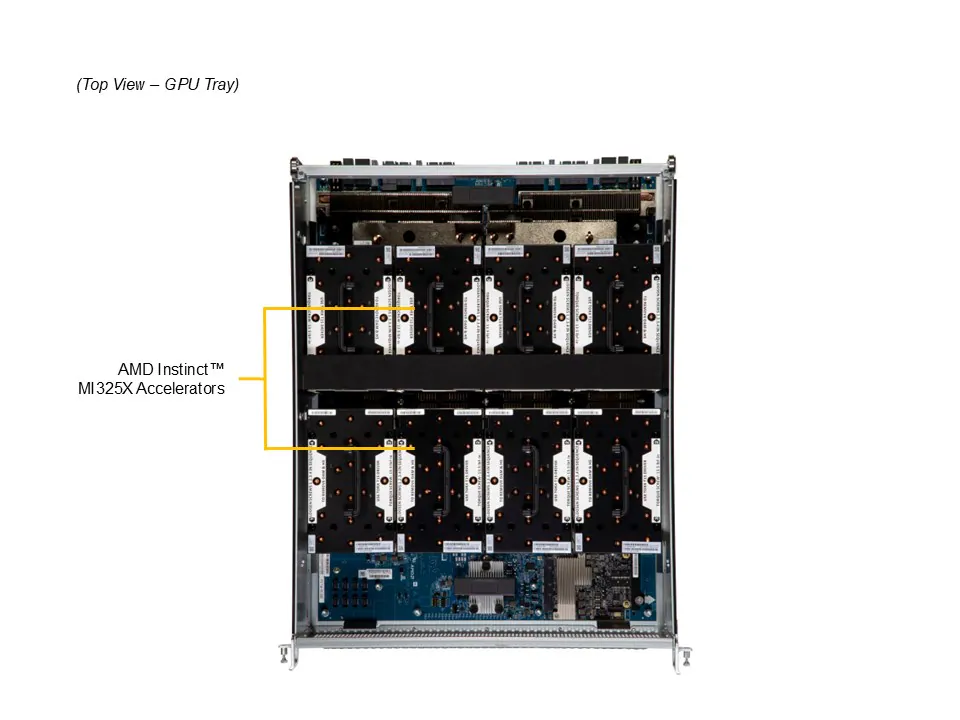

Accelerate your most demanding AI training, inference, and HPC workloads with the Supermicro AS-8126GS-TNMR, an 8U GPU server built on the AMD Instinct MI350X platform. It pairs eight AMD Instinct MI350X OAM accelerators on a UBB 2.0 baseboard with dual AMD EPYC 9575F processors (64 cores each, up to 5.0 GHz boost) and 2.25TB of DDR5-6400 ECC memory, delivering exceptional compute density for large-scale AI model training, LLM inference, generative AI, and scientific computing. Each MI350X GPU carries 288GB of HBM3E memory with 8 TB/s of bandwidth, for a combined 2.3TB (8 × 288GB) of coherent GPU memory across the platform, enough to run 520B+ parameter models without complex sharding.
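
For sizing purposes, the memory figures above reduce to simple arithmetic. The Python sketch below is a rough, weights-only estimate under stated assumptions: decimal units (1 TB = 1000 GB) and the bytes-per-parameter values in `BYTES_PER_PARAM` are illustrative choices, and KV cache, activations, and framework overhead, which all add to the real footprint, are ignored.

```python
# Rough, weights-only sizing sketch for the HBM3E figures quoted above.
# Assumptions (not from the spec sheet): decimal units and the
# bytes-per-parameter values below; KV cache, activations, and runtime
# overhead are NOT included.
GPUS = 8
HBM_PER_GPU_GB = 288

BYTES_PER_PARAM = {"fp16/bf16": 2.0, "fp8": 1.0, "fp4": 0.5}


def total_hbm_gb(gpus: int = GPUS, per_gpu_gb: int = HBM_PER_GPU_GB) -> int:
    """Aggregate HBM capacity across all GPUs in the node, in GB."""
    return gpus * per_gpu_gb


def weights_gb(params_billion: float, dtype: str) -> float:
    """Approximate memory needed just to hold the model weights, in GB."""
    # 1e9 params * bytes/param / 1e9 bytes/GB == params_billion * bytes/param
    return params_billion * BYTES_PER_PARAM[dtype]


if __name__ == "__main__":
    total = total_hbm_gb()
    print(f"Aggregate HBM: {total} GB (~{total / 1000:.1f} TB)")
    for dtype in BYTES_PER_PARAM:
        need = weights_gb(520, dtype)
        print(f"520B params @ {dtype}: {need:.0f} GB of weights, "
              f"{need / total:.0%} of aggregate HBM")
```

Running it reports 2304 GB of aggregate HBM and about 1,040 GB of 16-bit weights for a 520B-parameter model. At 4-bit precision the same weights come to roughly 260 GB, which is under a single GPU's 288GB.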

The AS-8126GS-TNMR provides high-bandwidth GPU-to-GPU communication over 4th Gen AMD Infinity Fabric in a fully meshed 8-GPU topology, with seven 153.6 GB/s bidirectional links per GPU for fast, low-latency data movement across all accelerators. For multi-node scaling, eight NVIDIA ConnectX-7 adapters, each on a PCIe Gen5 x16 link, provide 400 Gb/s NDR InfiniBand or Ethernet connectivity through OSFP ports. This 1:1 GPU-to-NIC ratio enables seamless integration into large-scale AI fabrics and supercomputing clusters. An additional Mellanox ConnectX-6 Dx dual-port 100GbE QSFP56 NIC handles management and storage traffic, while onboard dual 10GbE RJ45 ports and a dedicated BMC/IPMI port for out-of-band management round out the system's connectivity.
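
The interconnect numbers above can be totaled per GPU and per node. The following Python sketch is only a back-of-the-envelope restatement. Its helper names are hypothetical, and it assumes decimal units, raw link rates with no protocol overhead, and NIC line rate counted per direction.

```python
# Back-of-the-envelope totals for the interconnect figures quoted above.
# Assumptions: decimal units, raw signaling rates (no protocol overhead),
# and NIC line rate counted per direction. Helper names are hypothetical.
IF_LINKS_PER_GPU = 7        # full mesh: one link to each of the other 7 GPUs
IF_LINK_GBS_BIDIR = 153.6   # GB/s per link, bidirectional
NIC_COUNT = 8               # ConnectX-7 adapters, one per GPU
NIC_GBITS = 400             # Gb/s per adapter


def full_mesh_links(gpus: int) -> int:
    """Point-to-point links needed to connect every GPU to every other GPU."""
    return gpus * (gpus - 1) // 2


def infinity_fabric_per_gpu_gbs() -> float:
    """Aggregate bidirectional Infinity Fabric bandwidth per GPU, in GB/s."""
    return IF_LINKS_PER_GPU * IF_LINK_GBS_BIDIR


def scale_out_per_node_gbs() -> float:
    """Aggregate scale-out bandwidth per node, in GB/s per direction (8 bits/byte)."""
    return NIC_COUNT * NIC_GBITS / 8


if __name__ == "__main__":
    print(f"Mesh links for 8 GPUs: {full_mesh_links(8)}")
    print(f"Infinity Fabric per GPU: {infinity_fabric_per_gpu_gbs():.1f} GB/s (bidirectional)")
    print(f"Scale-out per node: {scale_out_per_node_gbs():.0f} GB/s per direction")
    print(f"Scale-out per GPU: {scale_out_per_node_gbs() / NIC_COUNT:.0f} GB/s per direction")
```

Assume the 153.6 GB/s link figure splits evenly between directions. Under that assumption, each GPU's in-node Infinity Fabric bandwidth comes to roughly ten times its 50 GB/s share of scale-out bandwidth. That gap is one reason bandwidth-heavy parallelism is typically kept inside the node.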

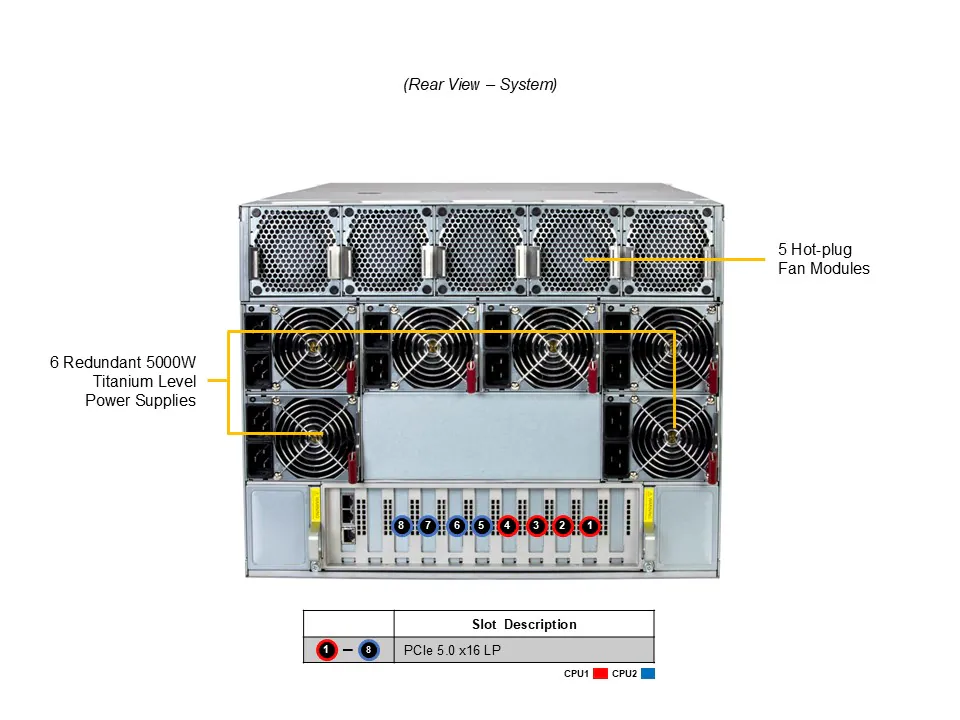

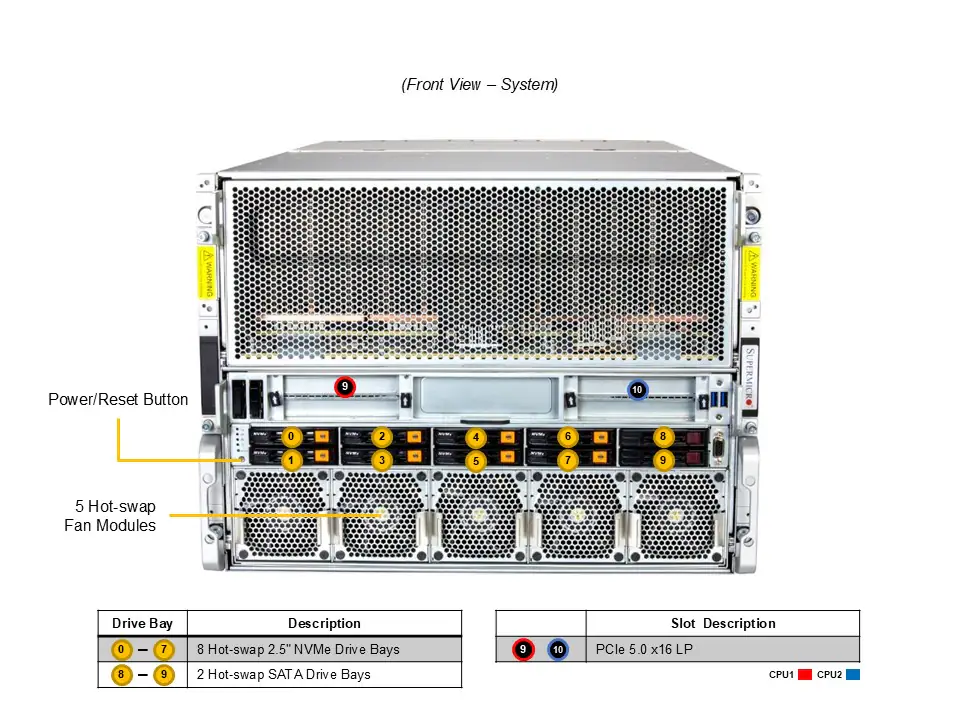

With 30.4TB of raw NVMe storage across 8 hot-swap 2.5″ PCIe Gen4 bays, a 960GB M.2 NVMe boot drive, 6x 5,250W Titanium-efficiency power supplies in a 3+3 redundant configuration, and 10 PCIe 5.0 expansion slots, the AS-8126GS-TNMR provides a dense, air-cooled, and scalable foundation for production AI infrastructure. Backed by a 3-year limited warranty with 3-year onsite next-business-day service, this system is ready to deploy in AI factories, neoclouds, research labs, and enterprise data centers.
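
The storage and power figures also break down simply. In this Python sketch, "3+3 redundant" is read as N+N, meaning any three supplies must carry the full load. Decimal TB, an even split of raw capacity across bays, and face-value PSU ratings are also assumptions here. Actual PSU output can depend on input voltage, so check the official specifications before facility planning.

```python
# Hypothetical restatement of the storage and power figures quoted above.
# Assumptions: decimal TB; raw capacity split evenly across bays; "3+3
# redundant" read as N+N (any three supplies carry the full load); PSU
# rating taken at face value (real output can depend on input voltage).
DRIVE_BAYS = 8
RAW_STORAGE_TB = 30.4
PSU_COUNT = 6
PSU_WATTS = 5250
LOAD_CARRYING_PSUS = 3


def per_drive_tb() -> float:
    """Raw capacity per bay if the total is split evenly across all bays."""
    return RAW_STORAGE_TB / DRIVE_BAYS


def redundant_power_budget_w() -> int:
    """Largest load that stays powered after losing three of the six supplies."""
    return LOAD_CARRYING_PSUS * PSU_WATTS


def installed_psu_capacity_w() -> int:
    """Nameplate capacity of all installed supplies combined."""
    return PSU_COUNT * PSU_WATTS


if __name__ == "__main__":
    print(f"Per-drive raw capacity: {per_drive_tb():.1f} TB")
    print(f"Redundant power budget: {redundant_power_budget_w():,} W")
    print(f"Installed PSU capacity: {installed_psu_capacity_w():,} W")
```

The redundant budget (15,750 W under these assumptions) is the meaningful ceiling for load, not the 31,500 W of installed nameplate capacity.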